Information Theory - Part 5: Everything or Overhyped?

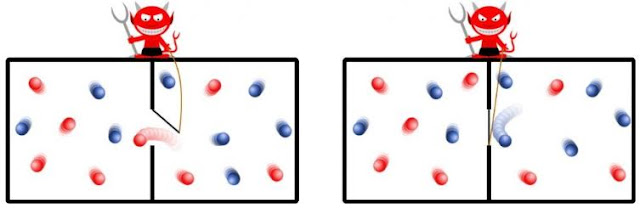

The most famous thought experiment in quantum theory, Schrödinger’s cat, raised a highly problematic question: At what size does the weirdness of quantum phenomena give way to the “normal” behavior we observe all the time? Nothing in the maths of quantum theory puts a size limit on it. Nor does the maths explain what constitutes an “observation” of a quantum entity. Could only living entities make an observation? Or did instruments count too? The accepted answer today is something called decoherence, writes Charles Seife in Decoding the Universe. “Decoherence” refers to any interaction between two items in the universe (light, matter, anything else). Every such interaction constitutes a measurement made by nature: an extraction of (there’s that word again) information. The tinier or colder or more isolated something is, the longer it can stay without interacting with any other piece of nature, i.e., the longer it takes before another part of nature can “measure” it. But ev...